When dealing with firmware images we often find large blobs of binary data which look totally random. The key question then is whether they are encrypted or compressed and which algorithm was used?

During Veles development I noticed that for most compression algorithms it is relatively easy to recognize them visually, which is the main subject of this article.

Visual artifacts

One might expect that compressed data should look the same as encrypted on statistical tests, but this turns out not to be true for most compression algorithms, even when looking at data stream without headers. Although there are already some good articles about this property from the /dev/ttyS0 team (the authors of binwalk; first art, second art), I decided to also discuss this topic extending it with recognition between different compressions and adding some fancy visualizations from Veles (if you are interested in how they work you can read this article).

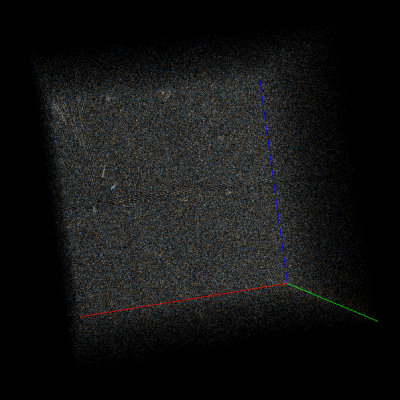

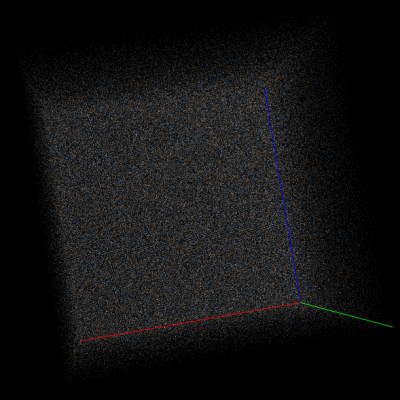

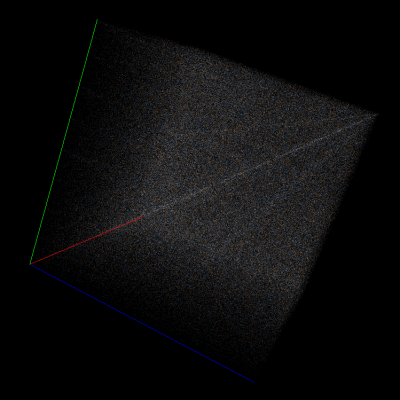

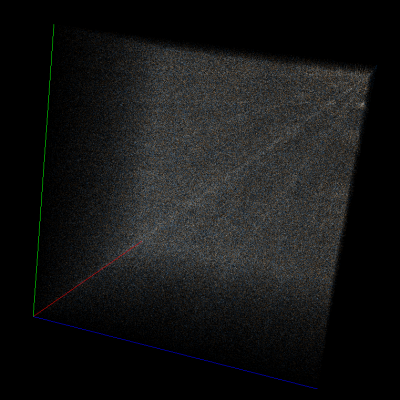

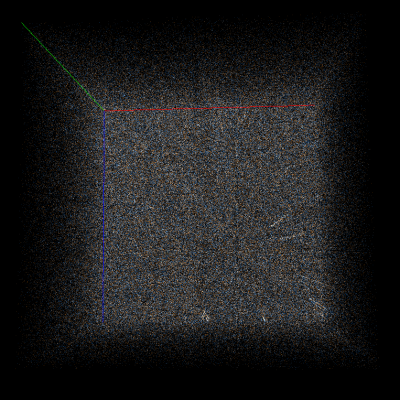

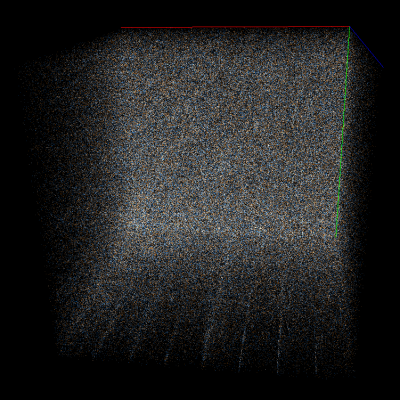

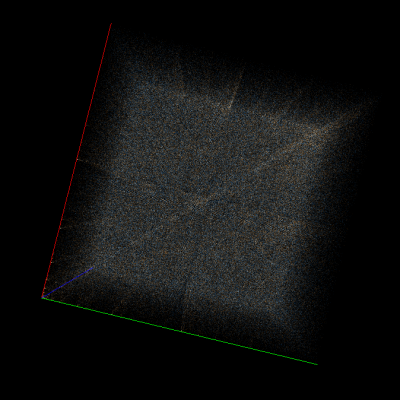

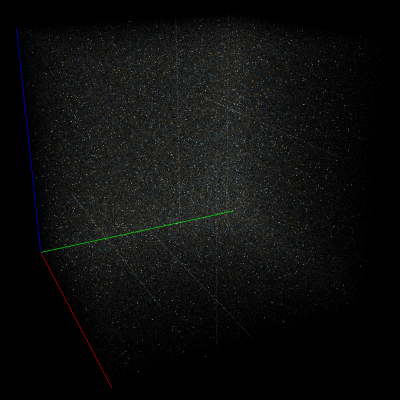

For example, consider Doom1.WAD compressed using deflate (wrapped in gzip format) in Veles trigram view and scroll to a random part of it (to exclude headers). At first glance the data may look totally random, but if you start rotating the cube you’ll notice many subtle artifacts. Let’s take a look at them with AES-CBC-encrypted data for comparison:

Right: Part of Doom1.WAD encrypted with AES-128-CBC.

As you can see, strong encryption seems to be indistinguishable from random data, but compression leaves many traces on a trigram view.

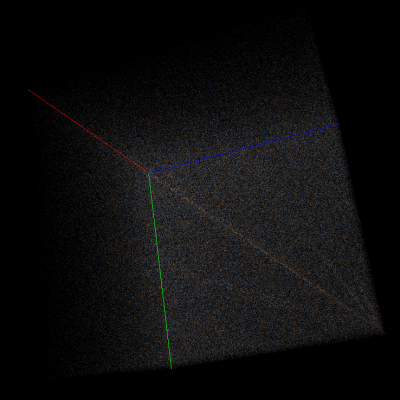

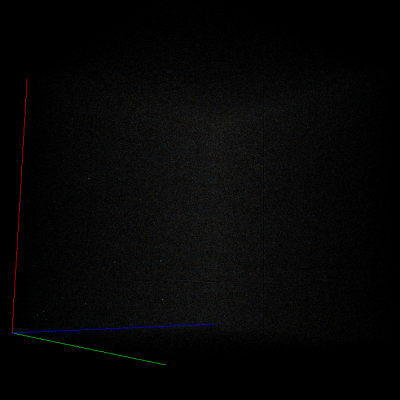

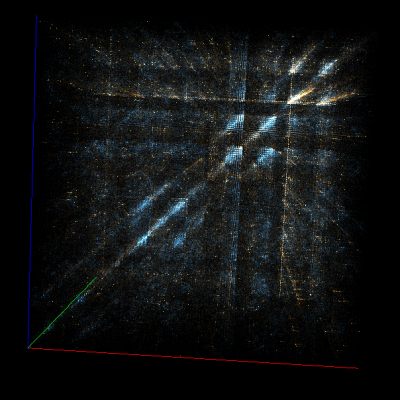

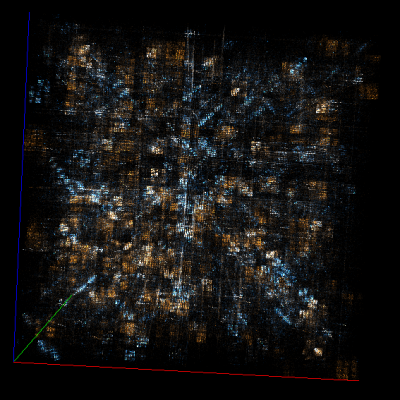

A small catalog of compression fingerprints

I have gathered and organized various artifacts from some popular compression algorithms below. This may prove very useful if you find some compressed data and want to identify the algorithm.

deflate

bzip2

Rar

Rar5 seems to have the same properties as Rar4, but they are a bit harder to spot. Both LZMA and LZMA2 seem to be indistinguishable from random data.

Poor encryption

Another feature which can be easily spotted on trigram visualization is poor encryption.

Let’s see some images (again, on the parts of Doom1.WAD):

AES-128-ECB

Xor with a random key

Summary

Although it seems that all compression algorithms I tested (excluding LZMA) can be easily distinguished from a decent encryption, it turns out that identification of the specific algorithm is much harder on small files because not all of the artifacts appear. We did not carry out tests on any large corpus of inputs, but it seems that you need to compress at least 10MB of data to make the compression algorithm look different from others.

If you’d like to see some more graphics for other compression algorithms please leave a comment, and we’ll update the article accordingly.

Like to see a TrueCrypt volume using these techniques.